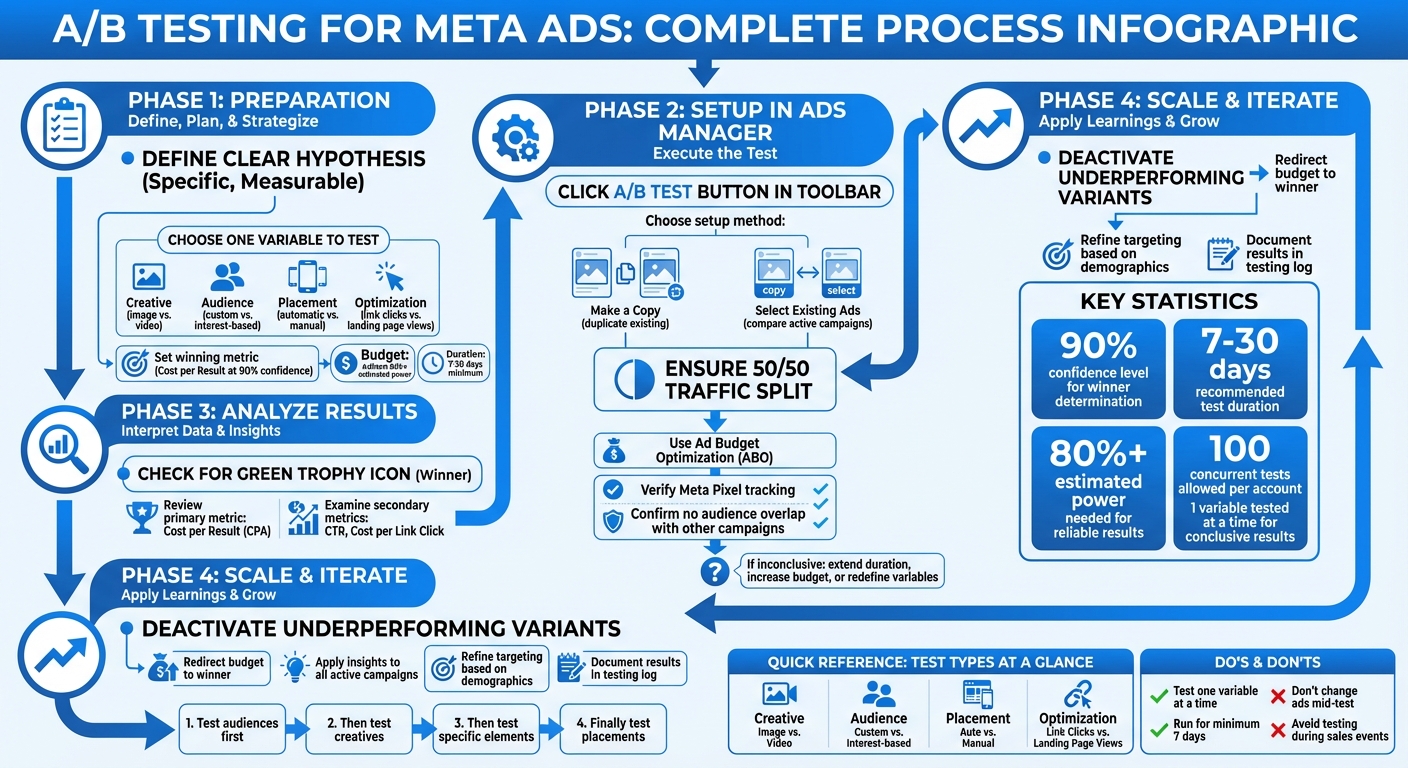

Running Meta Ads without disciplined A/B tests tends to waste ad spend and leave ROAS stagnant. A/B testing replaces guesswork with evidence by comparing two ad variations while isolating a single variable. A disciplined testing framework shows you which images, headlines, or audiences drive the lowest Cost Per Acquisition (CPA).

Key Takeaways for Meta Ads A/B Testing:

- What It Is: A/B testing mathematically compares two ad versions by changing exactly one isolated element (e.g., testing a static image vs. a 9:16 vertical video).

- Why It Matters: It definitively proves which creative or audience lowers your CPA and boosts your total conversions using hard data.

- How to Start: You must establish a clear hypothesis, isolate a single variable, and deploy Meta’s native A/B testing tool to prevent audience overlap and ensure clean data.

- Budget & Timing: You must run tests strictly for 7–30 days with a sufficient daily budget to exit the algorithmic learning phase.

- Analyzing Results: You must evaluate the ultimate winner based strictly on Cost per Result, utilizing secondary metrics like CTR only for contextual insights.

Deploying this structured testing process is the only way to scale your Meta Ads profitably. Let’s explore exactly how to set up, execute, and analyze A/B tests inside Meta Ads Manager.

A/B Testing Process for Meta Ads: 4-Step Framework

Preparing Your Meta Ads A/B Test Architecture

Preparation dictates whether your Meta Ads test delivers actionable revenue insights or simply burns budget. You must explicitly define your hypothesis, isolate your variables, and verify your technical tracking before launching any split test inside Meta Ads Manager.

Setting Strict Objectives and Isolating Variables

You must convert broad marketing questions into highly specific, measurable hypotheses. Formulating a hypothesis like, "Will optimizing for Purchases reduce my CPA compared to optimizing for Landing Page Views?" provides a definitive mathematical target. A vague question like "Which ad works better?" guarantees inconclusive results.

You must rigorously test exactly one variable at a time. Whether you are testing creative formats (video vs. carousel), audience segments (1% Lookalike vs. Broad Targeting), or placements (Advantage+ vs. Manual), introducing a second variable destroys the test's integrity. Meta explicitly states: "You'll have more conclusive results for your test if your ad sets are identical except for the variable that you're testing".

You must pre-determine your winning metric before launch. For direct-response campaigns, this is exclusively Cost per Purchase or Cost per Lead. Meta’s native A/B testing tool utilizes a strict 90% confidence level to definitively declare a winner.

Mandatory Technical Setup Requirements

Flawless tracking is strictly required before initiating an A/B test. You must verify that your Meta Pixel and Conversions API (CAPI) are accurately tracking deduplicated events. If you are optimizing for bottom-funnel events like Purchases, broken tracking invalidates the entire test.
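Meta deduplicates a browser Pixel event and a Conversions API event when both carry the same event name and event ID. The sketch below is one way to audit that pairing before a test, not Meta's implementation. It assumes you have exported your events into a list of dictionaries; the keys `event_name`, `event_id`, and `source`, and the sample order IDs, are hypothetical.

```python
from collections import defaultdict


def find_unmatched_events(events):
    """Report (event_name, event_id) pairs that were not seen from both sources.

    `events` is a list of dicts with hypothetical keys "event_name",
    "event_id", and "source" ("pixel" or "capi"). Returns a dict mapping
    each unmatched pair to the set of sources that are missing.
    """
    sources_by_key = defaultdict(set)
    for event in events:
        key = (event["event_name"], event["event_id"])
        sources_by_key[key].add(event["source"])

    expected = {"pixel", "capi"}
    return {
        key: expected - seen
        for key, seen in sources_by_key.items()
        if seen != expected
    }


# Hypothetical export: order-1002 arrived from the server only.
sample_events = [
    {"event_name": "Purchase", "event_id": "order-1001", "source": "pixel"},
    {"event_name": "Purchase", "event_id": "order-1001", "source": "capi"},
    {"event_name": "Purchase", "event_id": "order-1002", "source": "capi"},
]

unmatched = find_unmatched_events(sample_events)
print(unmatched)
```

An empty result suggests every event is arriving from both sources with matching IDs. A long list of unmatched pairs is a reason to fix tracking before spending test budget.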

You must ensure audience exclusivity. If a separate, live campaign targets the same audience as your A/B test, audience overlap will contaminate your data. To keep spend even across variants and retain control over each variant's budget, set budgets at the ad set level (ad set budget optimization, ABO) rather than using campaign budget optimization (CBO, now called Advantage+ campaign budget), which shifts spend toward whichever ad set it predicts will perform best.

Strict Budget and Timing Guidelines

You must run Meta A/B tests for an absolute minimum of 7 days to normalize weekly behavioral fluctuations, capping the test at a maximum of 30 days. High-ticket products or complex B2B services require extending the test to 14 days to accurately capture delayed attribution cycles.

Your daily budget has two jobs. It must be large enough for the test to reach at least 80% estimated statistical power, and large enough for each ad set to exit the learning phase (typically about 50 optimization events per week). Avoid launching critical A/B tests during major external events like Black Friday, as hyper-competitive auction volatility will heavily distort your baseline data.
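The learning-phase guideline translates into simple arithmetic: expected weekly conversions multiplied by expected cost per conversion, spread over seven days. The sketch below is a rough planning aid under that assumption. The function names and the $20 target CPA are illustrative, and actual costs during a test will differ from the plan.

```python
def min_daily_budget_per_variant(target_cpa, weekly_conversions=50):
    """Daily spend one variant needs to expect `weekly_conversions` per week."""
    return round(target_cpa * weekly_conversions / 7, 2)


def total_test_budget(target_cpa, variants=2, days=7, weekly_conversions=50):
    """Total spend for the whole test across all variants and days."""
    return round(target_cpa * weekly_conversions * variants * days / 7, 2)


# Illustrative plan: $20 expected cost per purchase, two variants, 14 days.
daily = min_daily_budget_per_variant(target_cpa=20.0)
total = total_test_budget(target_cpa=20.0, variants=2, days=14)
print(f"Daily budget per variant: ${daily}")
print(f"Total test budget: ${total}")
```

If the total is more than you can commit, optimizing for a higher-funnel event with a lower cost per result is one way to reach 50 events per week on a smaller budget.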

How to Execute A/B Tests in Meta Ads Manager

Deploying the Native A/B Test Tool

Executing a flawless split test requires utilizing Meta's native A/B testing feature to mathematically prevent audience overlap. Open Ads Manager, click the A/B Test button in the primary toolbar, and select your setup architecture:

- Method 1: Make a Copy: This method duplicates an existing, stable ad set and forces you to alter exactly one element (e.g., swapping the image). This is the optimal method for testing a new creative against a proven control baseline.

- Method 2: Select Existing Ads: This method forces two currently active campaigns or ad sets into a head-to-head comparison. This is highly effective for determining which of your current setups is mathematically superior.

After selecting your method, you must assign a specific winning metric (e.g., Cost per Purchase). Meta's algorithm will automatically enforce a strict 50/50 audience split, guaranteeing no single user is exposed to both variations.

High-Impact Test Types

Creative Testing: You must isolate specific visual or copy elements. Testing a native UGC video against a highly produced graphic image is a standard creative test. Every other element—headline, primary text, and audience—must remain perfectly identical.

Audience Testing: You must test distinct targeting strategies to locate cheaper CPMs. Comparing a 1% Purchase Lookalike audience directly against a completely Broad audience (no interest targeting) is highly effective. You must not test microscopically similar audiences (e.g., ages 25–30 vs. 26–31) as the overlap will render the test useless.

Placement Testing: You must determine if manual restrictions lower your CPA. Testing Advantage+ Placements (Automatic) against manual Instagram Reels placements requires keeping the ad creative and audience completely identical.
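For the audience test above, a quick way to see why near-identical age brackets make a poor comparison is to measure how many ages the two brackets share. This sketch assumes ages are evenly distributed within each bracket, which real audiences are not, so treat the number as a rough signal only. The function name is illustrative.

```python
def age_range_overlap(range_a, range_b):
    """Share of ages common to two inclusive age ranges (Jaccard index)."""
    ages_a = set(range(range_a[0], range_a[1] + 1))
    ages_b = set(range(range_b[0], range_b[1] + 1))
    return len(ages_a & ages_b) / len(ages_a | ages_b)


# The 25-30 vs. 26-31 example: five of the seven distinct ages are shared.
overlap = age_range_overlap((25, 30), (26, 31))
print(f"Shared ages: {overlap:.0%}")
```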

Strict Testing Best Practices

Do not edit the ad sets, budgets, or creatives once the A/B test is active. A mid-test edit changes what is being compared, so the variants are no longer identical except for the tested variable, which is the condition Meta's guidance quoted earlier sets for conclusive results.

You must enforce strict budget parity across all test variants. If your test suffers from severe under-delivery, you must immediately expand your audience size to feed the algorithm sufficient impressions.

Analyzing A/B Test Data and Declaring a Winner

Meta Ads Manager identifies the winning variant with a green trophy icon when the test completes. The winner is the variant with the lowest cost per result for your pre-selected metric (e.g., Cost per Purchase), which ties your decision to acquisition cost rather than to surface engagement. Cost per result is not the same as ROAS, so check revenue per purchase separately if order values differ between variants.

Evaluating Primary and Secondary Metrics

While Cost per Result (CPA) must remain your primary focus, secondary metrics like CTR (Click-Through Rate) and CPC (Cost per Link Click) help diagnose why a variant won. If Variant A generates a lower Cost per Purchase but Variant B generates a much higher CTR, Variant B's creative is better at earning the click, but those clicks convert less often once they arrive. That points to a mismatch between the ad's promise and the landing page, or to lower-intent traffic, and is worth investigating before you discard Variant B.
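The primary and secondary metrics can be derived from four raw numbers per variant. The sketch below uses made-up figures, and CTR here means link clicks divided by impressions. The `click_to_purchase_rate` field is an extra diagnostic added for illustration, not a column Meta reports under that name.

```python
def ad_metrics(spend, impressions, link_clicks, purchases):
    """Compute the primary metric and supporting diagnostics for one variant."""
    return {
        "ctr": link_clicks / impressions if impressions else None,
        "cpc": spend / link_clicks if link_clicks else None,
        "cost_per_purchase": spend / purchases if purchases else None,
        "click_to_purchase_rate": purchases / link_clicks if link_clicks else None,
    }


# Made-up results with equal spend and impressions per variant.
variant_a = ad_metrics(spend=500.0, impressions=40000, link_clicks=400, purchases=25)
variant_b = ad_metrics(spend=500.0, impressions=40000, link_clicks=800, purchases=20)

for name, metrics in (("A", variant_a), ("B", variant_b)):
    print(name, metrics)
```

In this example Variant B doubles the CTR yet loses on Cost per Purchase because a smaller share of its clicks turn into purchases, which is the pattern described above.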

Decoding the Experiments Dashboard

You must navigate to the Experiments section within Meta Business Suite for deeper statistical analysis. This dashboard provides a side-by-side performance comparison. Scrutinize the "chance of getting the same results" percentage; a high value (e.g., 92%) indicates the leading variant would likely win again if the test were repeated, rather than having won by chance. Let tests run for the full 7-day minimum so this percentage can stabilize.
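To build intuition for what a confidence threshold means, you can run a textbook two-proportion z-test on exported conversion counts. This is a different technique from whatever Meta uses to compute its "chance of getting the same results" figure, so the numbers will not match the dashboard. The counts below are invented, and treating each link click as an independent trial is an assumption.

```python
import math


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = abs(rate_a - rate_b) / std_err
    # erfc(z / sqrt(2)) equals 2 * (1 - Phi(z)) for the standard normal.
    return math.erfc(z / math.sqrt(2))


# Invented counts: 60 purchases from 2,000 clicks vs. 40 from 2,000.
p_value = two_proportion_p_value(conv_a=60, n_a=2000, conv_b=40, n_b=2000)
significant_at_90 = p_value < 0.10
print(f"p-value: {p_value:.4f}, significant at 90%: {significant_at_90}")
```

A p-value below 0.10 corresponds to clearing a 90% confidence bar in this simplified model. With small samples the same rate difference produces a much larger p-value, which is why low-volume tests so often come back inconclusive.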

Diagnosing Inconclusive Test Results

If Meta does not declare a winner, first suspect the test design. Inconclusive results most often come from insufficient budgets, very small audiences, or variables that are virtually identical, although they can also mean the two variants genuinely perform about the same. Meta’s documentation explicitly states:

If you test 18-20 year old women... against 20-22 year old women... your audiences may be too similar to get conclusive results.

If your test returns inconclusive, you must execute a larger test. You must dramatically increase the audience size, double the daily budget to force algorithmic learning, and test drastically different variables (e.g., entirely different video concepts rather than just changing the background color of an image).

Scaling Campaigns with Winning A/B Test Data

You must act on your test results immediately. Pause the losing ad variants and move that freed budget into the winning ad set to scale your ROAS.

Deploying the Winning Variant

The winning variant must become your new baseline control for all future tests. If a UGC video drastically outperformed high-end lifestyle photography, you must immediately pivot your creative strategy to produce more UGC variations. You must extract the demographic data from the winning test; if users aged 35-44 generated the lowest CPA, you must launch dedicated scaling campaigns explicitly targeting that age bracket.

Establishing a Continuous Testing Loop

You must permanently log every hypothesis and result in an external spreadsheet, as Meta periodically clears historical experiment data. A rigorous, continuous testing schedule dictates long-term profitability:

- Phase 1: Test broad audiences to isolate your most profitable demographic.

- Phase 2: Test macro-creative formats (Video vs. Carousel) against that winning audience.

- Phase 3: Test micro-creative elements (Video Hook A vs. Video Hook B) within the winning format.

- Phase 4: Test landing page variations against the ultimate winning ad.
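The external log can be as simple as a CSV file with one row per test. The sketch below shows one possible layout. The column names and the sample row are illustrative, and an in-memory buffer stands in for a real file so the example runs anywhere.

```python
import csv
import io

# Illustrative columns; adapt them to whatever you actually track.
FIELDS = [
    "start_date", "end_date", "phase", "hypothesis", "variable",
    "winner", "cost_per_result_a", "cost_per_result_b", "confidence",
]


def append_test_result(file_obj, row, write_header=False):
    """Append one test result to an open CSV file-like object."""
    writer = csv.DictWriter(file_obj, fieldnames=FIELDS)
    if write_header:
        writer.writeheader()
    writer.writerow(row)


# In practice: open("ab_test_log.csv", "a", newline="") instead of StringIO.
buffer = io.StringIO()
append_test_result(buffer, {
    "start_date": "2024-03-04",
    "end_date": "2024-03-18",
    "phase": 2,
    "hypothesis": "UGC video lowers Cost per Purchase vs. carousel",
    "variable": "creative format",
    "winner": "A",
    "cost_per_result_a": 20.0,
    "cost_per_result_b": 25.0,
    "confidence": 0.92,
}, write_header=True)

log_text = buffer.getvalue()
print(log_text)
```

Recording the phase alongside each hypothesis makes it easy to see later which winning audience or format a given creative test was built on.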

Scaling Meta Ads with Surfside PPC

If you require elite execution, Surfside PPC provides comprehensive Meta Ads management. We engineer strict A/B testing hypotheses, execute flawless technical setups, and ruthlessly scale winning campaigns to maximize your ROI. Whether you need full-service management or a high-level strategic consultation, Surfside PPC delivers the exact framework required to dominate your market.

Conclusion: Stop Guessing, Start Testing

A/B testing transforms Meta Ads from a volatile slot machine into a predictable revenue engine. You must start with a mathematically measurable hypothesis, aggressively isolate a single variable, and enforce a minimum 7-day testing window to guarantee clean data. You must evaluate the ultimate winner based exclusively on your target Cost per Result.

This continuous feedback loop is the only way to scale. Identifying a winning audience naturally leads to testing new creatives for that specific group, and discovering a winning creative format naturally leads to testing specific visual hooks. The startup Heights utilized strict Meta A/B testing to validate copy angles before altering their website, resulting directly in a massive 29.7% increase in conversion rates. Without validating the data first, earlier untested changes had actively damaged their sales.

You must maintain relentless consistency. Log your data, stick to a strict testing schedule, and ensure every single ad dollar spent is actively working to identify a more profitable variant.

Frequently Asked Questions

How can I choose the best variable to test in my Meta Ads campaign?

You must select a variable that directly impacts your bottom-line CPA. Formulate a highly specific hypothesis, such as: Will utilizing a 3-second UGC video hook lower my Cost per Purchase compared to a static product image? This guarantees your test generates actionable financial data.

You must strictly isolate exactly one variable at a time—whether it’s the audience targeting, the ad creative, or the delivery optimization. If you alter both the video and the headline simultaneously, you completely destroy your ability to attribute the performance shift to a specific element. Ensure your daily budget is large enough to push both variations out of the algorithmic learning phase.

What can I do if my A/B test results don’t show a clear winner?

If Meta returns an inconclusive result, you must immediately audit your test architecture. First, verify that you did not contaminate the test by altering multiple variables simultaneously. Second, confirm that your audience size was massive and completely free of overlap; micro-targeting destroys statistical significance.

If the setup was flawless, an inconclusive result simply means the variables were too similar or the budget was too restrictive to exit the learning phase. You must immediately launch a new test with drastically different variables (e.g., a completely different video concept) and double the daily budget to force the algorithm to declare a mathematical winner.

How do I ensure my A/B test for Meta Ads runs long enough to get accurate results?

You must enforce strict duration parameters to guarantee algorithmic accuracy:

- You must run the test for an absolute minimum of 7 days. This gives the Meta delivery algorithm time to stabilize CPMs and normalize weekend vs. weekday behavioral shifts. Ending early sharply raises the risk of a false positive.

- You must cap the test at 30 days. Meta's native A/B test tool does not allow tests to run beyond this window.

- You must secure sufficient conversion volume. If your audience is too small or your budget is too low to generate roughly 50 optimization events per week, the algorithm will fail to declare a statistically significant winner.

- You must never touch the campaign while it runs. Do not make manual edits based on Day 2 performance; you must wait the full duration for the CPA to stabilize.
