A/B testing is a statistical method used to optimize Pay-Per-Click (PPC) campaigns by comparing two variations of an ad, targeting parameter, or landing page to determine which generates superior performance metrics. This data-driven methodology replaces subjective guesswork with measured results. Executing a successful A/B test requires establishing strict baselines, defining actionable hypotheses, and running split traffic until statistical significance is achieved.

High-Impact Areas for Google Ads A/B Testing:

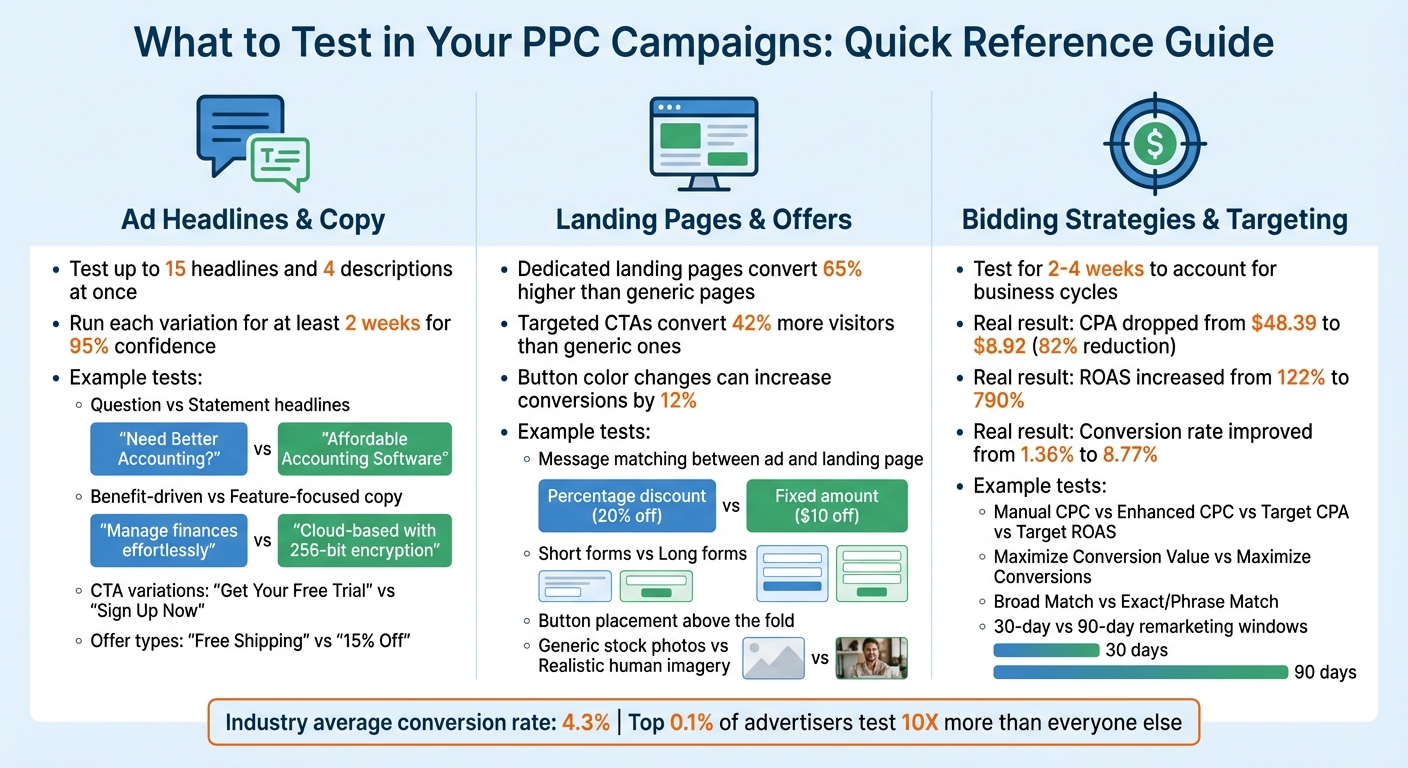

- Ad Headlines and Copywriting: Systematically testing distinct headlines, value propositions, and Call-to-Action (CTA) verbs within Responsive Search Ads (RSAs).

- Landing Page Architecture: Optimizing above-the-fold layouts, lead capture form lengths, and specific promotional offers to maximize conversion rates.

- Bidding Algorithms and Targeting: Comparing manual CPC bidding against automated Smart Bidding models, or testing exact match keywords against broad match discovery campaigns.

Executing continuous, single-variable tests ensures that every optimization decision is backed by reliable data. Achieving minor incremental gains in Click-Through Rate (CTR) and Conversion Rate compounds over time, driving massive improvements in overall Return on Investment (ROI). A/B testing remains a mandatory practice for PPC managers, ensuring human messaging perfectly aligns with algorithmic delivery.

A/B Testing Frameworks: Saving Capital in Your PPC Strategy


Core Variables to A/B Test in Your Google Ads Campaigns

PPC A/B Testing Elements Comparison Chart

Executing a profitable A/B testing strategy requires isolating high-impact variables within a Google Ads account. Prioritizing experiments on ad headlines, landing page layouts, and algorithmic bidding strategies yields the highest mathematical impact on ROI.

Testing Responsive Search Ad Headlines and Copy

Responsive Search Ads (RSAs) demand rigorous A/B testing to identify the highest-converting copy combinations. Advertisers must input up to 15 unique headlines and 4 descriptions to allow the Google Ads algorithm to test benefit-driven messaging against feature-focused text. Structuring an A/B test comparing a question-based headline ("Need Better Accounting?") against a definitive statement ("Affordable Accounting Software") rapidly identifies precise audience intent.

Running tests of specific Calls-to-Action (CTAs) and offer types for a minimum of two weeks gives the CTR data a realistic chance of reaching a 95% statistical confidence level. Advertisers should compare urgency-based CTAs ("Limited Time Offer") against low-friction CTAs ("Get Your Free Trial"). Secondary variables, such as display URL configurations and specific promotional framing ("Free Shipping" vs. "15% Off"), provide further optimization opportunities to lower Cost Per Click (CPC) through improved Ad Relevance.

Optimizing Landing Page Conversion Rates

Dedicated landing pages generate a 65% higher conversion rate than generic website homepages by maintaining strict message match with the triggering ad copy. Landing page A/B testing must focus on verifying that the primary H1 tag closely mirrors the keyword that triggered the ad the user clicked. Presenting the promised offer immediately "above the fold" reduces mobile user drop-off and supports a stronger landing page experience, which is one component of Quality Score.

Testing structural landing page elements directly impacts the total Cost Per Acquisition (CPA). Reducing lead capture form fields generally increases total lead volume, while longer forms qualify higher-intent prospects. Implementing highly specific, targeted CTA buttons increases visitor action by 42% compared to generic "Submit" buttons. Modifying button contrast colors, updating hero imagery with authentic photography, and ensuring sub-2-second mobile load speeds are foundational A/B tests for CRO.

Testing Smart Bidding Algorithms and Audience Targeting

A/B testing Google Ads bidding algorithms determines the most efficient method for scaling ad spend. Advertisers must split-test Manual CPC baseline campaigns against automated Smart Bidding models, such as Target CPA (tCPA) or Target ROAS (tROAS). For e-commerce campaigns, comparing "Maximize Conversion Value" against standard "Maximize Conversions" reveals which machine learning model drives higher gross revenue.

Audience and keyword targeting tests reveal untapped market share. A standard test involves comparing Exact Match keyword groups against Broad Match campaigns paired with automated bidding. In January 2026, a nutrition brand tested aggressive bid modifications and new broad match keywords, resulting in an 82% reduction in CPA (from $48.39 to $8.92) and an explosive ROAS increase from 122% to 790%. Bidding and targeting experiments require a strict 2–4 week testing window to account for algorithmic learning phases and standard business cycle fluctuations.
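The percentages quoted in the case above can be re-derived from the raw figures. The snippet below is a minimal sketch of that arithmetic; the helper name `percent_change` is an illustrative choice, not part of any Google Ads tooling.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, expressed in percent."""
    return (after - before) / before * 100


# Figures quoted in the text above.
cpa_change = percent_change(48.39, 8.92)   # CPA in dollars
roas_change = percent_change(122, 790)     # ROAS in percent

print(f"CPA change:  {cpa_change:.1f}%")
print(f"ROAS change: {roas_change:.1f}%")
```

Reporting relative change alongside the absolute before and after values makes it easier to compare results across tests with different baselines.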

| PPC Element to Test | Example Variant A (Control) | Example Variant B (Experiment) |
|---|---|---|
| Headline Type | "Need Better Accounting?" (Question) | "Affordable Accounting Software" (Statement) |
| Main Offer | "Free Shipping on All Orders" | "Save 15% Today Only" |
| CTA Verb | "Sign Up Now" | "Get Your Free Trial" |
| Copy Focus | "Manage finances effortlessly" (Benefit) | "Cloud-based with 256-bit encryption" (Feature) |
| Bidding Strategy | Target CPA | Target ROAS |

How to Configure a Statistically Significant A/B Test

Configuring a Google Ads A/B test correctly prevents data corruption and ensures capital is not wasted on false positives. A structured experiment requires a singular hypothesis, an isolated variable, and a designated primary KPI.

Defining the Scientific Hypothesis and Primary Metric

Every A/B test must originate from a SMART goal (Specific, Measurable, Attainable, Relevant, Time-bound) tied to a primary business metric. Establishing a clear hypothesis provides a framework for analyzing the final data. A proper hypothesis follows the formula: "Based on historical data, we believe that moving the CTA button above the fold for mobile users will cause an increase in lead volume. We will validate this by measuring a positive change in the Landing Page Conversion Rate."

Selecting a single primary metric prevents analytical confusion when reviewing test results. Lead generation tests must focus on Conversion Rate or CPA, while e-commerce tests prioritize ROAS or Average Order Value (AOV).
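The metrics named above all derive from a handful of raw counts. The following is a minimal sketch using the standard definitions; the class and field names are hypothetical, and AOV is computed on the assumption that each conversion corresponds to one order.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw totals for one arm of a test (field names are illustrative)."""
    impressions: int
    clicks: int
    conversions: int
    cost: float     # total ad spend
    revenue: float  # total conversion value

    @property
    def ctr(self) -> float:
        # Click-Through Rate: clicks per impression
        return self.clicks / self.impressions

    @property
    def conversion_rate(self) -> float:
        # Conversions per click
        return self.conversions / self.clicks

    @property
    def cpa(self) -> float:
        # Cost Per Acquisition: spend per conversion
        return self.cost / self.conversions

    @property
    def roas(self) -> float:
        # Return On Ad Spend: revenue per unit of spend
        return self.revenue / self.cost

    @property
    def aov(self) -> float:
        # Average Order Value, assuming one order per conversion
        return self.revenue / self.conversions


control = VariantStats(impressions=10_000, clicks=500, conversions=25,
                       cost=1_000.0, revenue=4_000.0)
print(control.ctr, control.conversion_rate, control.cpa, control.roas, control.aov)
```

Computing every metric from the same record keeps secondary metrics available for context while the hypothesis is still judged on the single primary metric.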

"Revenue per user is particularly useful for testing different pricing strategies or upsell offers. It's not always feasible to directly measure revenue, especially for B2B experimentation."

– Alex Birkett, Co-founder, Omniscient Digital

Guaranteeing Equal Traffic Splitting and Statistical Significance

The Google Ads "Experiments" feature is the mandatory tool for ensuring an unbiased 50/50 traffic split between the Control and Variant campaigns. Running both variants simultaneously eliminates external timing biases, such as seasonality or day-of-week fluctuations. Cookie-based traffic splitting is required for audiences exceeding 10,000 users to ensure the same user does not see conflicting ad variations.
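Google Ads performs the traffic split internally, so nothing needs to be coded to use it. Purely as an illustration of the idea behind cookie-based splitting, the sketch below shows how a stable identifier can be hashed so that the same user always lands in the same arm. The function name, the identifiers, and the use of SHA-256 are assumptions made for this example and do not describe how Google implements the feature.

```python
import hashlib


def assign_variant(user_id: str, experiment_id: str, variant_share: float = 0.5) -> str:
    """Deterministically map a user to 'control' or 'variant'.

    Hashing the experiment and user identifiers together yields a stable
    pseudo-random bucket in [0, 1), so repeat visits get the same arm.
    """
    digest = hashlib.sha256(f"{experiment_id}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x1_0000_0000  # first 32 bits scaled to [0, 1)
    return "variant" if bucket < variant_share else "control"


# The same user always receives the same assignment within an experiment.
print(assign_variant("cookie-123", "headline-test"))
print(assign_variant("cookie-123", "headline-test"))
```

Including the experiment identifier in the hash means a user's arm in one test does not determine their arm in the next one.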

As a rule of thumb, a test needs at least 100 conversions per variant, or 1,000–2,000 impressions for top-of-funnel CTR tests, before a significance check is meaningful. Stopping an A/B test prematurely produces unreliable results; industry data shows over 60% of paid media tests fail due to insufficient runtime. Advertisers must aim for a 95% confidence level (p < 0.05), meaning that if the two variants truly performed the same, a performance delta this large would appear by random chance less than 5% of the time.
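For readers who want to check the 95% threshold by hand, a two-proportion z-test is one common way to do it. The sketch below uses only the Python standard library. It is a textbook test rather than a description of how Google Ads computes significance internally, and the click and conversion counts are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two rates.

    conv_* are conversions (or clicks); n_* are clicks (or impressions).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative numbers: control converts 100 of 2,000 clicks, variant 130 of 2,000.
z, p = two_proportion_z_test(100, 2_000, 130, 2_000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```

A p-value below 0.05 corresponds to the 95% confidence level used throughout this guide.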

Monitoring the Experiment Without Disrupting the Algorithm

Altering the base campaign or the active experiment during a live test instantly corrupts the data and resets the Google Ads algorithmic learning phase. Marketers must monitor real-time performance using the Google Ads dashboard without executing manual bid or copy changes until the test concludes. Analyzing secondary indicators via heatmap tools or session recordings provides qualitative context for quantitative drop-offs.

A/B tests must run in full 7-day increments (e.g., exactly 14 or 21 days) to standardize weekend versus weekday traffic volume. Once the Google Ads "Experiment Power" score confirms statistical significance, advertisers utilize the "Auto-Apply" feature to seamlessly migrate 100% of the campaign budget to the mathematically proven winning variant.
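The full-week rule can be applied mechanically when planning a test. The helper below is a small sketch with hypothetical inputs: it takes a required sample size per variant and the expected daily traffic per variant, then rounds the runtime up to whole weeks with a two-week floor.

```python
import math


def planned_duration_days(required_per_variant: int,
                          daily_traffic_per_variant: float,
                          min_weeks: int = 2) -> int:
    """Days to run a test, rounded up to full 7-day increments."""
    raw_days = math.ceil(required_per_variant / daily_traffic_per_variant)
    weeks = max(min_weeks, math.ceil(raw_days / 7))
    return weeks * 7


# Illustrative inputs: 8,000 clicks needed per variant at 400 clicks per day.
print(planned_duration_days(8_000, 400))
```

Rounding up rather than down means each weekday and weekend day is represented the same number of times in both arms.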

Strict Best Practices for Google Ads A/B Testing

Adhering to strict scientific testing principles guarantees that Google Ads experiments produce repeatable, scalable growth. Isolating variables and documenting outcomes prevents marketers from executing redundant tests.

The Single Variable Isolation Rule

Testing one specific variable at a time is the single most critical rule of A/B testing. Modifying the landing page layout, ad headline, and bidding strategy simultaneously destroys data integrity, making it impossible to attribute a performance increase to a specific change. While 58% of enterprise companies utilize A/B testing, only teams strictly enforcing single-variable isolation generate reliable, scalable insights.

Utilizing the Google Ads Experiments Dashboard

The native Google Ads Experiments tool completely automates the mathematical heavy lifting of traffic splitting and significance tracking. Global marketplace Fiverr aggressively utilized the Experiments dashboard to test complex landing page combinations, saving their internal performance team over 3 hours of manual tracking per week. Similarly, design platform Canva verified a 60% increase in total conversions by relying exclusively on Google's automated experiment tracking.

Building a Historical Testing Ledger

Documenting every executed hypothesis, test duration, and final statistical outcome builds an invaluable proprietary knowledge base for a digital marketing team. Maintaining a centralized testing ledger prevents the accidental repetition of failed tests and identifies overarching consumer behavior trends. Elite advertisers test at 10x the volume of average accounts entirely because they maintain rigorous historical documentation of all past performance deltas.
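A testing ledger can be as simple as a list of structured records exported to a spreadsheet. The sketch below shows one possible shape. Every field name is an assumption chosen for illustration, and the sample entry is invented rather than taken from a real account.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class ExperimentRecord:
    """One row in the ledger (fields are illustrative)."""
    hypothesis: str
    variable: str
    primary_metric: str
    start: date
    end: date
    control_value: float
    variant_value: float
    p_value: float
    winner: str


def already_tested(ledger, variable: str, hypothesis: str) -> bool:
    """True if the same variable and hypothesis already appear in the ledger."""
    key = (variable.strip().lower(), hypothesis.strip().lower())
    return any((r.variable.strip().lower(), r.hypothesis.strip().lower()) == key
               for r in ledger)


def ledger_to_csv(ledger) -> str:
    """Serialize the ledger to CSV text for sharing in a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ExperimentRecord)])
    writer.writeheader()
    for record in ledger:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Invented sample entry, for illustration only.
ledger = [ExperimentRecord(
    hypothesis="Question headline beats statement headline",
    variable="headline", primary_metric="conversion_rate",
    start=date(2025, 3, 3), end=date(2025, 3, 17),
    control_value=0.050, variant_value=0.065, p_value=0.04, winner="variant",
)]
print(already_tested(ledger, "Headline", "question headline beats statement headline"))
print(ledger_to_csv(ledger))
```

Checking `already_tested` before launching a new experiment is what prevents the accidental repetition described above.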

Common PPC A/B Testing Failures to Avoid

Executing A/B tests with flawed methodology guarantees wasted ad spend and trains the Google Ads bidding algorithm on inaccurate conversion data.

Multivariate Confusion

Testing multiple unlinked elements simultaneously causes "multivariate confusion." If a marketer changes a headline to focus on price while simultaneously changing the landing page to feature a video, it is impossible to determine which change drove a subsequent 15% shift in CPA. Strict variable isolation is the only defense against corrupted test data.

The "Peeking Problem" and Premature Termination

Terminating an A/B test before reaching a 95% statistical confidence level (p < 0.05) invalidates the entire experiment. The "peeking problem" occurs when advertisers check daily data and prematurely declare a winner based on early, random traffic fluctuations; each additional look at the data raises the chance of a false positive. Pre-calculating the required sample size based on historical conversion volume forces discipline and ensures the test runs to actual completion.
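The pre-calculation mentioned above can be sketched with the standard sample-size formula for comparing two proportions. The 80% power setting and the example inputs are assumptions for illustration; the baseline rate should come from the account's own historical data.

```python
import math
from statistics import NormalDist


def required_sample_per_variant(baseline_rate: float,
                                relative_lift: float,
                                alpha: float = 0.05,
                                power: float = 0.80) -> int:
    """Clicks needed per variant to detect `relative_lift` over `baseline_rate`.

    Uses a two-sided test at significance `alpha` (0.05 = 95% confidence).
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Illustrative: 5% baseline conversion rate, hoping to detect a 20% relative lift.
print(required_sample_per_variant(0.05, 0.20))
```

Fixing this number before launch gives the test a defined finish line, so daily fluctuations cannot be mistaken for a result. Smaller expected lifts require substantially more traffic, because the sample size grows with the inverse square of the difference being detected.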

Testing Vanity Metrics Over Bottom-Line Revenue

Designing A/B tests exclusively to increase CTR or impression share frequently damages actual business profitability. A clickbait headline will reliably win a CTR test, but it will simultaneously destroy the campaign's ROAS by driving unqualified, non-converting traffic to the landing page. All A/B test hypotheses must tie directly back to primary revenue metrics like CPA, Cost Per Lead (CPL), or Gross Margin.

Scaling ROI with Surfside PPC Experimentation

Surfside PPC operationalizes advanced A/B testing frameworks to systematically lower acquisition costs for our clients. We remove the technical friction of managing split tests, allowing businesses to capitalize on mathematically proven campaign optimizations.

Professional Google Ads Management

Surfside PPC enforces strict single-variable testing methodologies within our full-service Google Ads management packages. We configure exact 50/50 traffic splits to identify initial winners, and utilize safe 70/30 scaling splits when introducing aggressive new copy variants against established control campaigns. Our rigorous data analysis prevents the premature termination of experiments, protecting your budget from false positives.

Advanced PPC Training and Strategy Courses

For in-house marketing teams, the Surfside PPC educational courses provide comprehensive blueprints on isolating variables and configuring the Google Ads Experiments dashboard. We teach marketers exactly how to structure hypotheses, calculate required sample sizes, and translate raw click data into scalable, high-converting ad copy.

Conclusion: Compounding Growth Through Experimentation

A/B testing is an iterative mathematical process designed to generate compounding growth, not a hunt for a singular "silver bullet" optimization. Consistently improving a campaign's conversion rate by just a few percentage points every quarter drastically transforms the long-term ROI of an ad account. Routine testing every 30 to 60 days prevents campaign fatigue and guarantees messaging remains highly relevant to shifting consumer intent.
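The compounding effect is straightforward to quantify. The sketch below assumes each quarter's winning test delivers the same relative lift, which is a simplification; real gains vary from test to test.

```python
def compounded_rate(baseline_rate: float,
                    relative_lift_per_period: float,
                    periods: int) -> float:
    """Rate after applying the same relative lift once per period."""
    return baseline_rate * (1 + relative_lift_per_period) ** periods


# Illustrative: a 4% conversion rate improved by 5% (relative) every quarter for two years.
after_two_years = compounded_rate(0.04, 0.05, 8)
print(f"{after_two_years:.4f}")
```

Under these assumptions, eight consecutive 5% relative gains multiply the baseline by 1.05^8, or roughly 1.48, which is a 48% improvement without any single test producing a dramatic win.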

Automated bidding algorithms require high-quality human inputs to function effectively. A/B testing bridges the gap between algorithmic delivery and human psychology, ensuring that the highest-converting copy and visual hierarchy are fed directly into the Google Ads machine learning engine.

A/B Testing FAQs

What is the most impactful variable to A/B test first in PPC?

The primary headline of your ad copy and the main Call-to-Action (CTA) on your landing page are the highest-impact variables to test first. Because the headline dictates your CTR (and heavily influences Quality Score), and the CTA dictates your Conversion Rate, testing these two distinct elements independently yields the fastest, most noticeable improvements to your CPA.

How do I test campaigns with low conversion volume?

Campaigns lacking the volume to reach 100 conversions quickly should measure higher-volume secondary metrics so the test can still reach statistical significance. Instead of testing for CPA, optimize the test to measure "Add to Cart" events, lead form initiation, or top-of-funnel CTR. Extending the test duration to 30 or 45 days is also necessary to aggregate enough data to reach a 95% confidence level on low-traffic accounts.

How do I implement a winning A/B test safely?

Implement winning variations utilizing the native "Auto-Apply" feature within the Google Ads Experiments dashboard to ensure a seamless transition of campaign history. For manual updates, never overwrite the existing control asset; always pause the loser and enable the proven variant to maintain historical Quality Score data and prevent resetting the algorithmic learning phase.
